by Shelly Fan at Singularity Hub: Generative AI is a data hog. The algorithms behind chatbots like ChatGPT learn to create human-like content by scraping terabytes of online articles, Reddit posts, TikTok captions, or YouTube comments. They find intricate patterns in the text, then spit out search summaries, articles, images, and other content.

For the models to become more sophisticated, they need to capture new content. But as more people use them to generate text and then post the results online, it’s inevitable that the algorithms will start to learn from their own output, now littered across the internet. That’s a problem.

A study in Nature this week found a text-based generative AI algorithm, when heavily trained on AI-generated content, produces utter nonsense after just a few cycles of training.

“The proliferation of AI-generated content online could be devastating to the models themselves,” wrote Dr. Emily Wenger at Duke University, who was not involved in the study.

Although the study focused on text, the results could also impact multimodal AI models. These models also rely on training data scraped online to produce text, images, or videos.

As the usage of generative AI spreads, the problem will only get worse.

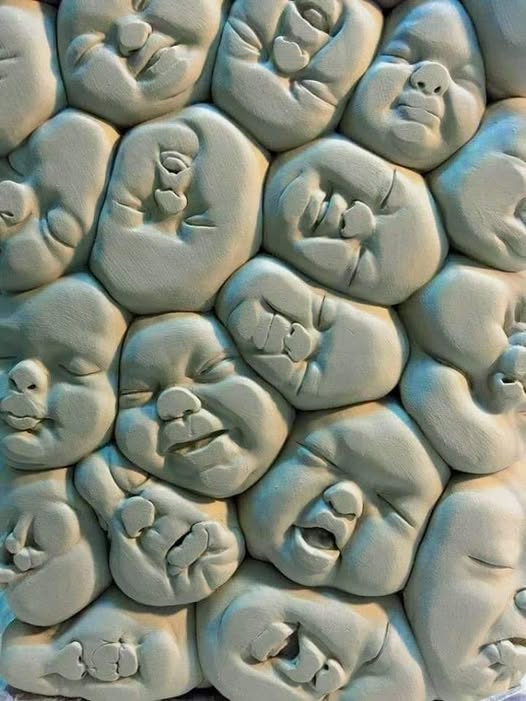

The eventual end could be model collapse, where AI increasingly fed data generated by AI is overwhelmed by noise and only produces incoherent baloney.

Hallucinations or Breakdown?

It’s no secret generative AI often “hallucinates.” Given a prompt, it can spout inaccurate facts or “dream up” categorically untrue answers. Hallucinations could have serious consequences, such as a healthcare AI incorrectly, but authoritatively, identifying a scab as cancer.

Model collapse is a separate phenomenon, where AI trained on its own self-generated data degrades over generations. It’s a bit like genetic inbreeding, where offspring have a greater chance of inheriting diseases. While computer scientists have long been aware of the problem, how and why it happens for large AI models has been a mystery.
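To make the idea concrete, here is a minimal toy sketch in Python. It is not the study's method: the "model" is just a table of token frequencies, and the vocabulary size, sample size, and the function name `resample_generations` are assumptions chosen for illustration. Each generation is "trained" only on text sampled from the previous generation, so a rare token that fails to be sampled once can never come back.

```python
import random
from collections import Counter

def resample_generations(vocab_size=50, sample_size=100, generations=9, seed=0):
    """Toy illustration (hypothetical setup, not the study's code).

    Returns the number of distinct tokens present in each generation's data.
    """
    rng = random.Random(seed)

    # Generation 0: "human" data with a long tail; token i has weight 1 / (i + 1).
    tokens = list(range(vocab_size))
    weights = [1.0 / (i + 1) for i in tokens]
    data = rng.choices(tokens, weights=weights, k=sample_size)

    distinct_counts = [len(set(data))]
    for _ in range(generations):
        # "Train": estimate token frequencies from the current data only.
        counts = Counter(data)
        # "Generate": sample the next dataset from those estimated frequencies.
        data = rng.choices(list(counts.keys()), weights=list(counts.values()), k=sample_size)
        distinct_counts.append(len(set(data)))
    return distinct_counts

distinct_counts = resample_generations()
print(distinct_counts)
```

In this toy, the count of distinct tokens can only stay level or fall from one generation to the next, a crude stand-in for the loss of rare content that the inbreeding analogy describes. Real language models are far more complex, so treat this only as intuition.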

In the new study, researchers built a custom large language model and trained it on Wikipedia entries. They then fine-tuned the model nine times using datasets generated from its own output and measured the quality of the AI’s output with a so-called “perplexity score.” True to its name, the higher the score, the more bewildering the generated text.
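For readers curious about the metric, the sketch below shows one standard definition of perplexity: the exponential of the average negative log-probability a model assigns to each token in a text. The function name `perplexity` and the example probabilities are made up for illustration, and the study's exact evaluation setup is not described here.

```python
import math

def perplexity(token_probs):
    """Perplexity from the probabilities a model assigned to each actual token.

    token_probs: list of probabilities in (0, 1], one per token (illustrative input).
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    # Average negative log-likelihood per token, then exponentiate.
    avg_neg_log_likelihood = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_likelihood)

# A model that gives each token a 50% chance is less "perplexed" by the text
# than one that gives each token only a 10% chance.
confident = perplexity([0.5, 0.5, 0.5, 0.5])
unsure = perplexity([0.1, 0.1, 0.1, 0.1])
print(confident, unsure)
```

A perplexity of about 2 means the model is, on average, as uncertain as if it were choosing between two equally likely tokens; a perplexity of about 10 corresponds to ten equally likely choices.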

Within just a few cycles, the AI notably deteriorated.

In one example, the team gave it a long prompt about the history of building churches, one that would make most humans’ eyes glaze over. After the first two iterations, the AI spewed out a relatively coherent response discussing revival architecture, with an occasional “@” slipped in. By the fifth generation, however, the text completely shifted away from the original topic to a discussion of language translations.

The output of the ninth and final generation was laughably bizarre:

“architecture. In addition to being home to some of the world’s largest populations of black @-@ tailed jackrabbits, white @-@ tailed jackrabbits, blue @-@ tailed jackrabbits, red @-@ tailed jackrabbits, yellow @-.”

More here.