“We need to worry about the many small catastrophes that AI can bring.”

Ethan Mollick at TIME: Conversations about the future of AI are too apocalyptic. Or rather, they focus on the wrong kind of apocalypse. There is considerable concern about the future of AI, especially as a number of prominent computer scientists have raised the risks of Artificial General Intelligence (AGI), an AI smarter than a human being. They worry that an AGI will lead to mass unemployment or that AI will grow beyond human control, or worse (the movies Terminator and 2001 come to mind).

Discussing these concerns seems important, as does thinking about the much more mundane and immediate threats of misinformation, deep fakes, and proliferation enabled by AI. But this focus on apocalyptic events also robs most of us of our agency. AI becomes a thing we either build or don’t build, and one that no one outside of a few dozen Silicon Valley executives and top government officials really has any say over.

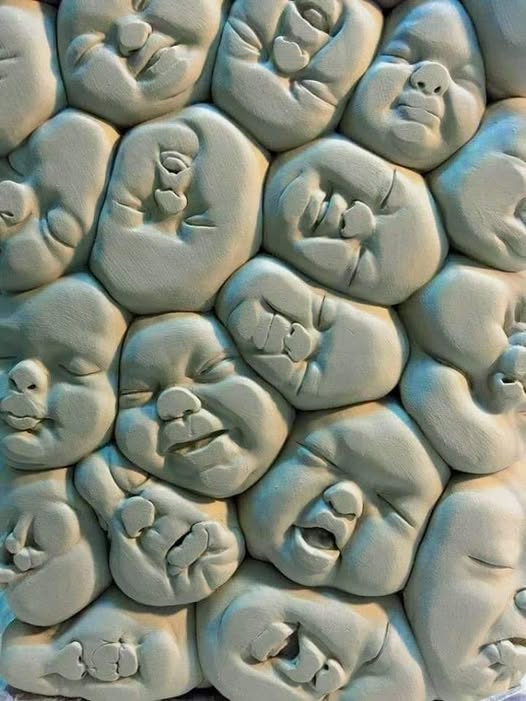

But the reality is we are already living in the early days of the AI Age, and, at every level of an organization, we need to make some very important decisions about what that actually means. Waiting to make these choices means they will be made for us. It opens us up to many little apocalypses, as jobs and workplaces are disrupted one-by-one in ways that change lives and livelihoods.

We know this is a real threat because, regardless of any pauses in AI creation, and without any further AI development beyond what is available today, AI is going to impact how we work and learn. We know this for three reasons: First, AI really does seem to supercharge productivity in ways we have never really seen before. An early controlled study in September 2023 showed large-scale improvements at work tasks from using AI, with time savings of more than 30% and higher-quality output. Add to that the near-immaculate test scores achieved by GPT-4, and it is obvious why AI use is already becoming common among students and workers, even if they are keeping it secret.

More here.