By Renée DiResta in Noema: You’ve probably seen it: a flock of starlings pulsing in the evening sky, swirling this way and that, feinting right, veering left. The flock gets denser, then sparser; it moves faster, then slower; it flies in a beautiful, chaotic concert, as if guided by a secret rhythm.

Biology has a word for this undulating dance: “murmuration.” In a murmuration, each bird sees, on average, the seven birds nearest it and adjusts its own behavior in response. If its nearest neighbors move left, the bird usually moves left. If they move right, the bird usually moves right. The bird does not know the flock’s ultimate destination and can make no radical change to the whole. But these small alterations by individual birds, occurring in rapid sequence, shift the course of the whole, creating mesmerizing patterns. We cannot quite understand it, but we are awed by it. It is a logic that emerges from, and is an embodiment of, the network. The behavior is determined by the structure of the network, which shapes the behavior of the network, which shapes the structure, and so on. The stimulus, or information, passes from one organism to the next through this chain of connections.
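To make the seven-neighbor rule concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a model taken from the research described here: the function names, the `rate` parameter, and the choice to have each bird turn partway toward the average heading of its nearest neighbors are all hypothetical simplifications.

```python
import math

def nearest_neighbors(positions, i, k=7):
    """Return the indices of the k birds closest to bird i (excluding i)."""
    others = [j for j in range(len(positions)) if j != i]
    others.sort(key=lambda j: math.dist(positions[i], positions[j]))
    return others[:k]

def step(positions, headings, k=7, rate=0.5):
    """One update: each bird turns partway toward its neighbors' mean heading.

    headings are angles in radians; rate (an assumed parameter) is the
    fraction of the turn completed in a single step.
    """
    new_headings = []
    for i in range(len(positions)):
        nbrs = nearest_neighbors(positions, i, k)
        if not nbrs:
            # A lone bird has nobody to follow and keeps its heading.
            new_headings.append(headings[i])
            continue
        # Average unit vectors rather than raw angles so that headings
        # on either side of the 0/2*pi wraparound average sensibly.
        mean_x = sum(math.cos(headings[j]) for j in nbrs) / len(nbrs)
        mean_y = sum(math.sin(headings[j]) for j in nbrs) / len(nbrs)
        target = math.atan2(mean_y, mean_x)
        # Smallest signed angle from the current heading to the target.
        diff = math.atan2(math.sin(target - headings[i]),
                          math.cos(target - headings[i]))
        new_headings.append(headings[i] + rate * diff)
    return new_headings
```

Calling `step` repeatedly on a flock in which one bird starts out flying crosswise pulls that bird into line, while its neighbors each deflect slightly toward it. No bird ever consults the flock as a whole.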

While much is still mysterious and debated about the workings of murmurations, computational biologists and computer scientists who study them describe what is happening as “the rapid transmission of local behavioral response to neighbors.” Each animal is a node in a system of influence, with the capacity to affect the behavior of its neighbors. Scientists call this process, in which groups of disparate organisms move as a cohesive unit, collective behavior. The behavior is derived from the relationship of individual entities to each other, yet only by widening the aperture beyond individuals do we see the entirety of the dynamic.

Online Murmurations

A growing body of research suggests that human behavior on social media (coordinated activism, information cascades, harassment mobs) bears striking similarity to this kind of so-called “emergent behavior” in nature: occasions when organisms like birds or fish or ants act as a cohesive unit, without hierarchical direction from a designated leader. How that local response is transmitted (how one bird follows another, how I retweet you and you retweet me) is also determined by the structure of the network. For birds, signals along the network are passed from eyes or ears to brains pre-wired at birth with the accumulated wisdom of millennia. For humans, signals are passed from screen to screen, news feed to news feed, along an artificial superstructure designed by humans but increasingly mediated by at-times-unpredictable algorithms. It is curation algorithms, for example, that choose what content or users appear in your feed; the algorithm determines the seven birds, and you react.

Our social media flocks first formed in the mid-2000s, as the internet provided a new topology of human connection. At first, we ported our real, geographically constrained social graphs to nascent online social networks. Dunbar’s Number held: we had maybe 150 friends, probably fewer, and we saw and commented on their posts. However, it quickly became a point of pride to have thousands of friends, then thousands of followers (a term that conveys directional influence in its very tone). The friend or follower count was prominently displayed on a user’s profile, and a high number became a heuristic for assessing popularity or importance. “Friend” became a verb; we friended not only our friends, but our acquaintances, their friends, their friends’ acquaintances.

The virtual world was unconstrained by the limits of physical space or human cognition, but it was anchored to commercial incentives. Once people had exhaustively connected with their real-world friend networks, the platforms were financially incentivized to help them find whole new flocks in order to maximize the time they spent engaged on site. Time on site meant a user was available to be served more ads; activity on site enabled the gathering of more data, the better to infer a user’s preferences in order to serve them just the right content, and the right ads. “People You May Know” recommendation algorithms nudged us into particular social structures, doing what MIT network researcher Sinan Aral calls the “closing of triangles”: suggesting that two people with a mutual friend should be connected themselves.
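The closing of triangles is simple enough to sketch in a few lines of Python. This is a toy illustration, not any platform’s actual recommender: the function name `people_you_may_know`, the sample graph, and the decision to rank candidates purely by their count of mutual friends are assumptions made for the example.

```python
from collections import Counter

def people_you_may_know(graph, user, top_n=3):
    """Rank people who are not yet friends with `user` by mutual-friend count.

    graph maps each person to the set of people they are connected to.
    """
    friends = graph[user]
    mutual = Counter()
    for friend in friends:
        for candidate in graph[friend]:
            # Skip the user and anyone they are already connected to.
            if candidate != user and candidate not in friends:
                mutual[candidate] += 1
    return mutual.most_common(top_n)

# Hypothetical five-person network.
graph = {
    "ana": {"ben", "cal"},
    "ben": {"ana", "dee"},
    "cal": {"ana", "dee", "eli"},
    "dee": {"ben", "cal"},
    "eli": {"cal"},
}

print(people_you_may_know(graph, "ana"))  # → [('dee', 2), ('eli', 1)]
```

Each suggestion the user accepts closes an open triangle and, in doing so, creates new open ones, which is part of why the process can keep running long after a person’s real-world network is exhausted.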

Eventually, even this friend-of-friending was tapped out, and the platforms began to create friendships for us out of whole cloth, based on a combination of avowed, and then inferred, interests. They created and aggressively promoted Groups, algorithmically recommending that users join particular online communities based on a perception of statistical similarity to other users already active within them.

This practice, called collaborative filtering, combined with the increasing algorithmic curation of our ranked feeds to usher in a new era. Similarity to other users became a key determinant in positioning each of us within networks that ultimately determined what we saw and who we spoke to. These foundational nudges, born of commercial incentives, had significant unintended consequences at the margins that increasingly appear to contribute to perennial social upheaval.
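For readers who want to see the mechanics, here is a minimal sketch of user-based collaborative filtering applied to group recommendations. It is one common textbook variant, not a description of any platform’s system: the Jaccard similarity measure, the scoring rule, the function names, and the sample memberships are all assumptions chosen for illustration.

```python
def jaccard(a, b):
    """Overlap between two sets: shared items divided by all items."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def recommend_groups(memberships, user, top_n=3):
    """Suggest groups joined by users whose memberships resemble `user`'s.

    memberships maps each user to the set of groups they belong to.
    """
    mine = memberships[user]
    scores = {}
    for other, groups in memberships.items():
        if other == user:
            continue
        similarity = jaccard(mine, groups)
        if similarity == 0:
            continue  # no shared groups, so no evidence of shared taste
        # Every group the similar user has that we lack gets a vote,
        # weighted by how similar that user is to us.
        for group in groups - mine:
            scores[group] = scores.get(group, 0.0) + similarity
    # Highest score first; ties broken alphabetically for stable output.
    return sorted(scores, key=lambda g: (-scores[g], g))[:top_n]

# Hypothetical memberships.
memberships = {
    "u1": {"birding", "hiking"},
    "u2": {"birding", "hiking", "kayaking"},
    "u3": {"birding", "chess"},
    "u4": {"baking"},
}

print(recommend_groups(memberships, "u1"))  # → ['kayaking', 'chess']
```

Nothing in the sketch knows what any group is about. It sees only that people who joined one group also tended to join another, which is why a system of this kind can link communities that no human editor would think to connect.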

One notable example in the United States is the rise of the QAnon movement over the past few years. In 2015, recommendation engines had already begun to connect people interested in just about any conspiracy theory (anti-vaccine interests, chemtrails, flat earth) to each other, creating a sort of inadvertent conspiracy correlation matrix that cross-pollinated members of distinct alternate universes. A new conspiracy theory, Pizzagate, emerged during the 2016 presidential campaign, as online sleuths combed through a GRU hack of the Clinton campaign’s emails and decided that a Satanic pedophile cabal was holding children in the basement of a DC pizza parlor.

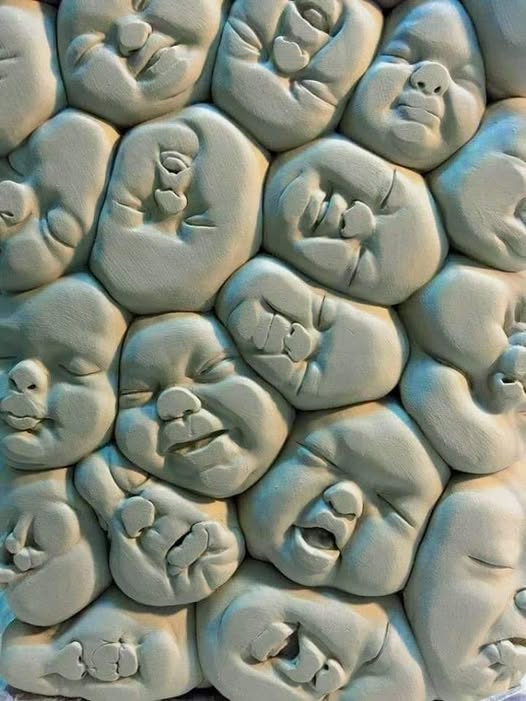
